This will make sense in a minute.

I spent the first half of 2025 reading about AI. Running experiments. Building proofs of concept. Teaching others. I fully grasped the promise of large language models. I could explain transformers at a whiteboard. I knew the APIs inside and out.

And yet, I wasn’t actually using AI in my daily work.

Knowing Without Doing

As a Google engineer, I had early exposure to generative AI. We were running experiments and sharing learnings while many were still figuring out what ChatGPT even was. But there’s a peculiar trap that comes with early access: you can mistake understanding for doing.

I was “too busy” with my regular work to actually implement anything. Sound familiar?

In early 2025, LLMs were tools, not products. They lived behind REST APIs or sat in web interfaces. They weren’t integrated into workflows. The products that packaged these capabilities into something usable were still new—and many people hadn’t been introduced to them yet. Companies and creators were still figuring out how to tell the story.

For someone like me—a backend engineer who knows how to call an API—that created a strange mental barrier. I could use them, theoretically. But building that bridge from “API I can call” to “thing that helps me work” felt like unnecessary effort.

A Café, a MacBook, and Five Weeks Off

Everything changed during my summer vacation. Five weeks off (yes, Sweden is generous with vacation). I’d just bought myself a new laptop.

Day one: I dropped my wife off in the city—she had “errands” to run, and like a good husband, I stay out of the way when appropriate. I took my shiny new MacBook to a café, found a sunny spot outside, and decided to finally see what this “vibe coding” thing was all about.

Vibe coding—if you haven’t encountered the term—is essentially describing what you want to build in natural language and letting AI write the code. Less typing, more vibing. I was skeptical. I’ve been writing code for years. I review code daily. I build complex distributed systems. Could an AI really help me?

I fired up Replit. Not because I needed a web IDE—I wanted to understand what the average user experienced. That productification of AI that I hadn’t seen before. I’m a backend guy; I know the APIs. But I needed to see a product.

They had just released their new “agent” mode: more autonomy, more reasoning, less hand-holding.

The Kids Need to Read

Getting started was easy—I was immediately dropped into what looked like a purpose-built IDE with a chatbot ready to go. Now comes the hard part: what do I actually build?

My sons’ teachers had mentioned it would be great if the kids read every day during summer holiday. And since I’m too much of an engineer to just take them to the bookstore, buy some books, and sit down with them to read… I decided to build a web app where they could log in and create their own stories with AI.

I did what anyone with a half-baked idea would do: write a semi-good prompt. But I was too prescriptive about how to build it and way too vague about what I actually wanted—the goals, the user journey, the experience.

The AI was smart. It understood what I was really after and ignored much of my overly technical “how.” It started building a React app with a Postgres database. Four minutes of scaffolding later, my jaw hit the floor.

This looked… amazing? Surely it doesn’t actually work?

Four Minutes to Jaw on Floor

It worked.

The platform’s harness and blueprints were smart enough to bootstrap almost everything—ORM, two-way bindings, the works. The app was functional immediately. I only had to add a Gemini API key.

The StoryQuest navigation bar—complete with star currency and user profile. Yes, that’s 90 stars and 4 stories completed.

Once that was done, I proceeded to chat-code my way through feature after feature:

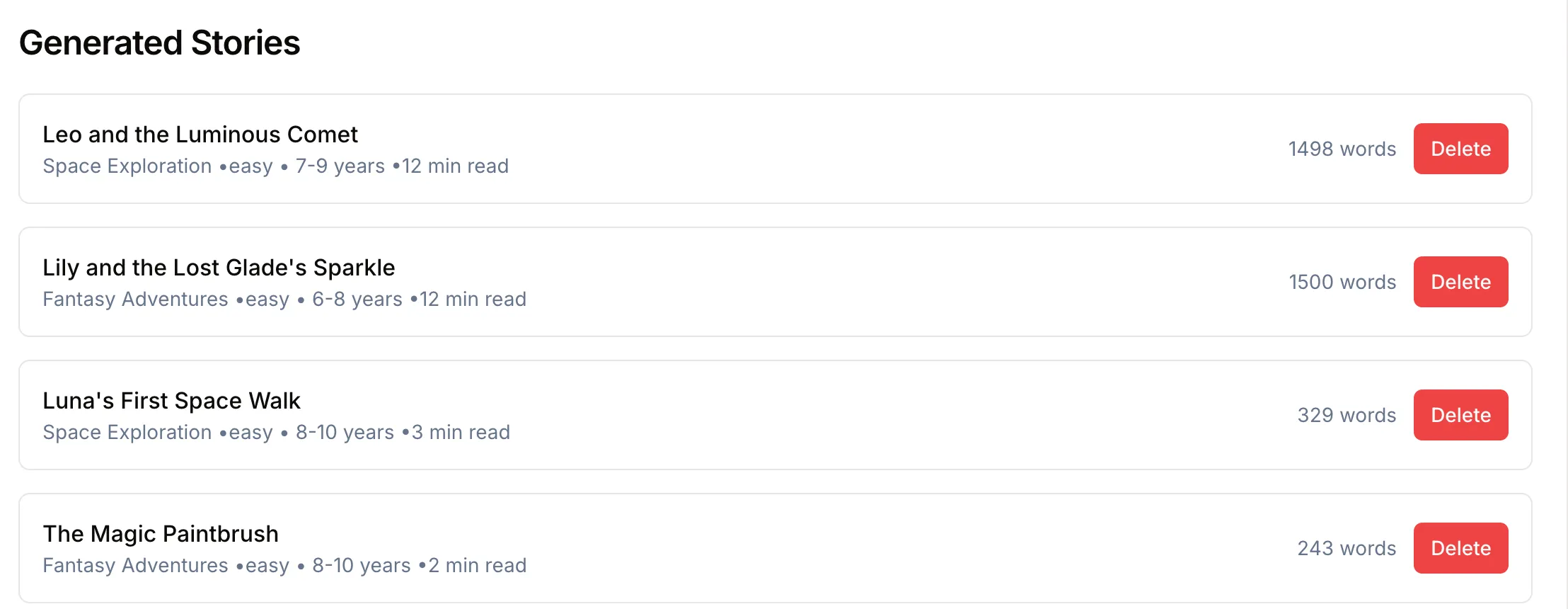

- Story categories

- Reading comprehension quizzes

- A star system (currency for the kids)

- The ability to create their own stories by spending stars earned from reading

AI-generated stories ready to read.

The features kept snowballing. Every time I finished one thing, I thought of something else. “What if the quizzes gave different amounts of stars based on difficulty?” Done. “What about AI-generated cover art for each story?” Done.

My wife taps me on the shoulder. “Errands are done. Time to go home.”

“But… I’m not done yet! Buy something at the café and sit with me—you must be thirsty or hungry?”

I just wanted to keep going. I was having fun.

They Actually Used It

It had built-in hosting, so getting everything live was trivial. I added Firebase Auth so the kids could log in, deployed the app, and… that was it. Live.

The kids actually used it all summer. They’d compete to earn stars, create ridiculous stories, and take the comprehension quizzes. My youngest got genuinely upset when I accidentally broke something during an update.

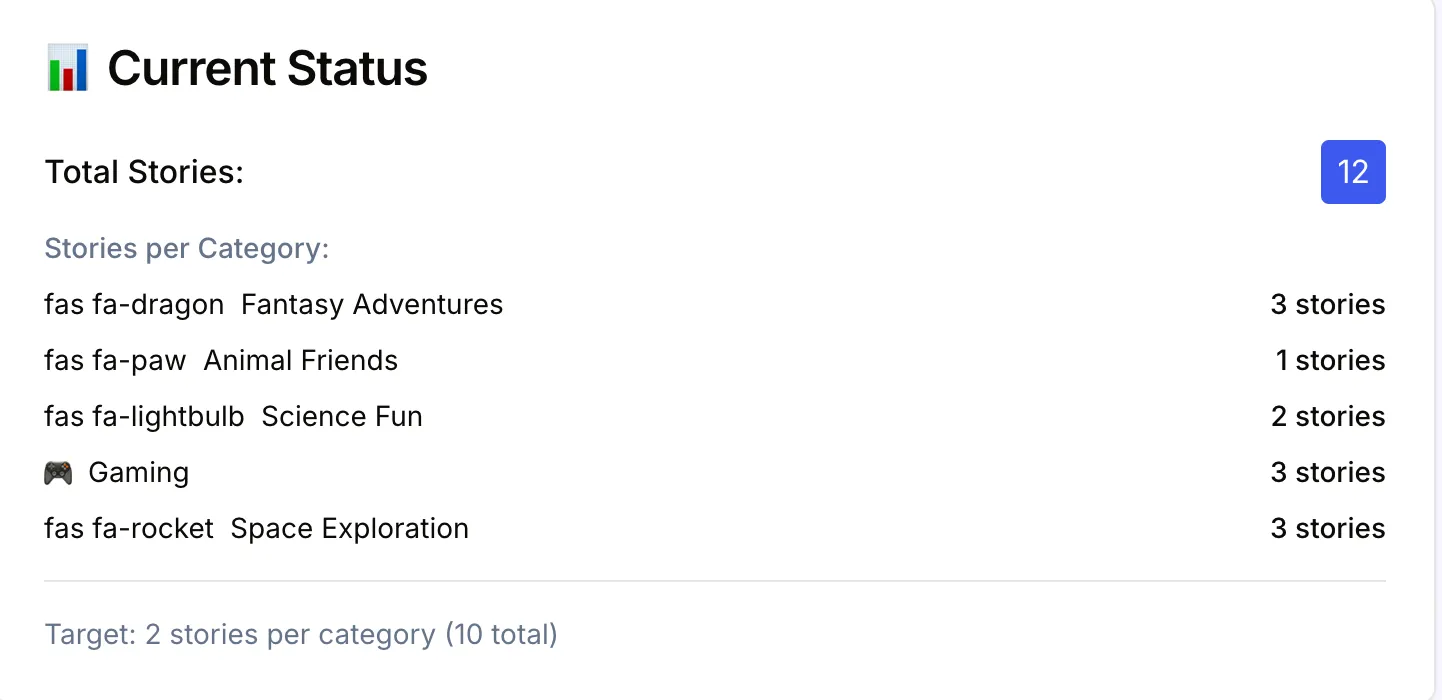

Of course, they also had opinions. My carefully curated categories—“Fantasy Adventures,” “Science Fun”—were deemed… a bit lame. “Dad, can we have gaming stories?” And thus, the Gaming category was born.

The Gaming category.

Kid-approved cover art.

12 stories across 5 categories. Not bad for a café afternoon.

The app is still running. It’s not pretty code—it’s vibe-coded chaos with Gemini calls everywhere—but it works. And my kids used it.

Get on the Bike

Here’s what I learned that sunny afternoon: no amount of reading the manual beats just doing it.

You can’t learn to ride a bike by reading about it. You have to get on, fall over, scrape your knee, get up, and try again. The documentation won’t save you. The API reference won’t save you. You have to feel the wobble and figure out how to balance.

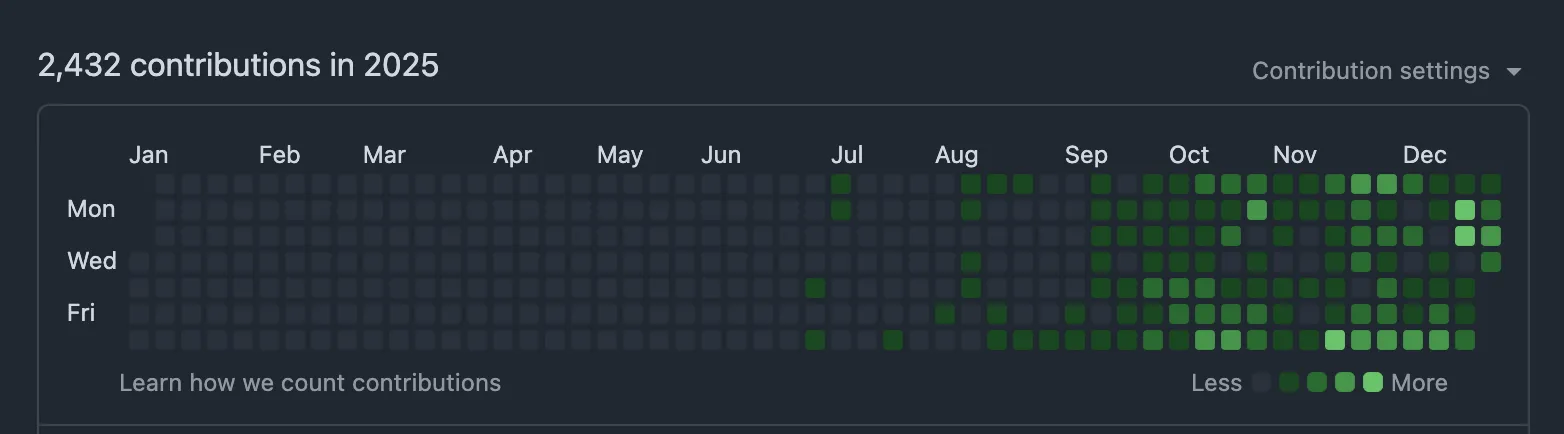

I spent months understanding AI. It took one afternoon of actually building something to get it.

That summer kickstarted a completely different relationship with AI tools—one where I stopped treating them as interesting technology to understand and started treating them as… well, tools. Things that help me work.

My GitHub contributions in 2025. Spot when I got on the bike.

But the story doesn’t end here. That café afternoon showed me what was possible—but I was still just chatting with an AI in a browser. The platform did the heavy lifting for me. What if I could build my own?

The real shift came when I discovered tools like Claude Code and Gemini CLI, learned the patterns behind good instruction files, and stumbled into the world of tool calling and MCP. I started to understand that the LLM is just the engine—what you really need is the car.

That’s when I started building the car.

That’s Part 2.

This is Part 1 of a series about what I learned using AI in 2025. Follow me on LinkedIn to catch Part 2.